IT Pro Verdict

Suited to top-of-rack or aggregation deployments, the S4820T crams plenty of features into its 1U chassis. It offers an affordable server and storage migration route to copper 10GbE, and support for OpenFlow means it’s SDN-ready out of the box.

Pros

Low cost per copper 10GbE port; Quad 40GbE uplinks; VLT and OpenFlow support; L3 routing included

Cons

CLI configuration access

Migrating data centre servers to 10-Gigabit (10GbE) is expensive, but Dell's S4820T switch aims to offer businesses a lower-cost alternative. Not only does this 1U switch cram in 48 10GBaseT ports, but it augments them with four 40-Gigabit (40GbE) fibre uplinks.

A primary role for the S4820T is to connect servers and storage over copper 10GbE. It's part of Dell's new networking portfolio where fixed form factor switches are replacing traditional chassis-based switches in the data centre as more flexible alternatives.

The S4820T focuses on emerging software defined networks (SDNs), which have been developed to cope with the rapidly changing traffic patterns in data centres. Hardware ready and loaded with the latest FTOS 9.2 software, the S4820T fully supports OpenFlow, an open standard that controls how traffic flows through a network fabric and does away with protocols such as RSTP.

It also slots in seamlessly with spine and leaf network models. Dell's flagship Z9000 40GbE switches provide the spine and multiple S4820T switches, as well as Dell's other S-series switches, connect as leaf nodes over redundant 40GbE links.

The S4820T has dual redundant PSUs and cooling modules for standard or reverse air flows

Hardware features

Under the hood are sixteen 40GbE chips: four drive the high-speed uplinks and the other twelve provide four 10GbE ports each. If you want more 10GbE, you can use breakout cables to split each of the 40GbE QSFP+ ports into four fibre 10GbE ports.
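The port arithmetic is easy to check. The short Python sketch below uses only the figures quoted above; the variable names are ours, and the oversubscription ratio is a derived figure rather than a Dell specification.

```python
# Port arithmetic for the S4820T, using only figures from the review.
# All names here are illustrative, not Dell terminology.
CHIPS_TOTAL = 16      # 40GbE chips in the switch
CHIPS_UPLINK = 4      # chips driving the QSFP+ uplinks
LANES_PER_CHIP = 4    # each 40GbE chip can be split into four 10GbE lanes

copper_ports = (CHIPS_TOTAL - CHIPS_UPLINK) * LANES_PER_CHIP   # 10GBaseT ports
breakout_ports = CHIPS_UPLINK * LANES_PER_CHIP                 # fibre 10GbE via breakout
max_10gbe_ports = copper_ports + breakout_ports

# Derived figure: server-facing bandwidth versus uplink bandwidth.
downlink_gbps = copper_ports * 10
uplink_gbps = CHIPS_UPLINK * 40
oversubscription = downlink_gbps / uplink_gbps

print(copper_ports, breakout_ports, max_10gbe_ports)   # 48 16 64
print(f"{oversubscription:.0f}:1")                     # 3:1
```

With all four uplinks in use, the copper ports are oversubscribed 3:1; breaking the uplinks out instead gives 64 10GbE ports in total.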

Hardware redundancy is good as the S4820T has two hot-plug 460W PSUs. Cooling is handled by two hot-plug fan modules designed to work with the hot-aisle/cold-aisle cooling flows used in the latest data centres. Dell offers two cooling modules that provide either standard or reverse air flows.

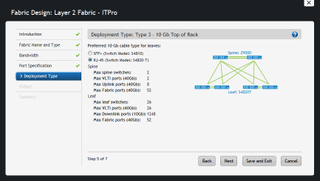

Dell's free AFM software provides wizards to help design and deploy network fabrics

Stacks or VLTs?

The S4820T supports stacking and Dell's VLT (virtual link trunking) but there are distinct differences between the two technologies. You can place up to six switches in a stack using either 40GbE uplinks or multiple 10GbE ports.

The stack is managed as a single entity but this is no different to any other vendor's stacking solution and is not ideal in a data centre. The stack comprises a master and up to five slaves but if you need to reboot the master switch then the whole stack will need restarting.

Dell's VLT allows two switches to be placed in a high availability domain where they are connected using a minimum of two 40GbE ports. In a VLT domain both switches are active; they share the same virtual MAC address and use their OOB (out-of-band) management ports for heartbeat functions. If one goes down, the other assumes all primary functions and carries on regardless with no loss of service.

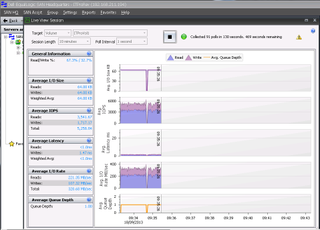

From SAN HQ we could see a momentary pause in traffic flow as the VLT domain reconfigured itself after a switch failure

VLT testing

To test the VLT feature we used a pair of S4820T switches and had to quickly get acquainted with their CLI as they don't have a web GUI. Dell's thinking here is its Active Fabric Manager (AFM) is sufficient for general switch management.

From the CLI on each switch you create a VLT domain and assign logical port channels to it. Next, you define the physical ports that will be members of the channel and then provide one virtual MAC address for both switches.

To generate traffic throughput we connected an EqualLogic PS6100 IP SAN array to both switches with redundant links. On the other side was a PowerEdge R720 server connected to the storage array using MPIO links across both switches.

Running Iometer to generate a load on the iSCSI targets, we rebooted one of the S4820T switches from its CLI. There was a brief pause while the operational switch assumed all duties but we could see from Dell's SAN HeadQuarters and Iometer that traffic continued to flow between the server and storage array.
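The behaviour we saw can be pictured with a toy model. The Python sketch below is not Dell's MPIO or VLT implementation; it simply assumes a round-robin path selector (the class and path names are hypothetical) that skips any path whose switch is down.

```python
from itertools import cycle


class MultipathSession:
    """Toy round-robin multipath selector; all names are hypothetical."""

    def __init__(self, paths):
        self.up = {p: True for p in paths}
        self._order = cycle(paths)
        self._count = len(paths)

    def set_state(self, path, is_up):
        self.up[path] = is_up

    def next_path(self):
        # Try each path at most once per call, skipping any that are down.
        for _ in range(self._count):
            path = next(self._order)
            if self.up[path]:
                return path
        raise RuntimeError("no paths available")


session = MultipathSession(["switch-A", "switch-B"])
before = [session.next_path() for _ in range(4)]

session.set_state("switch-A", False)   # simulate rebooting one switch
during = [session.next_path() for _ in range(4)]

print(before)   # I/O alternates across both switches
print(during)   # I/O continues over the surviving switch only
```

In this model the I/O never stops; the brief pause seen in the real test corresponds to the time taken to detect the failed path, which the sketch does not attempt to simulate.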

Another feature is iSCSI Optimisation where the switch recognises Dell's Compellent and EqualLogic storage arrays. Using a preconfigured CLI enabler command, the switch will auto-configure flow control, Jumbo frames and CoS prioritisation for the array.

Dell's OpenManage provides full monitoring and alerting facilities for all S-series switches

Deploy and manage

Dell's AFM is a free VMware VM running CentOS used for provisioning switches in a network fabric. The AFM console is well designed and provides wizards for creating virtual switch fabrics. Configurations can be saved as templates and, once verified by AFM, deployed to all switches in the new fabric.

General switch management and monitoring are handled by Dell's OpenManage Network Manager (OMNM). Using SNMP and sFlow, it provides views of the physical status of each switch within the fabric and its individual ports.

The home page opens with an overview of all managed resources along with alerts for alarms and faulty nodes. Switch performance can be monitored in detail and you can pull up views of traffic throughput plus the top talkers and protocols.

Conclusion

Dell's new S4820T offers data centres an affordable option for upgrading their servers and storage to 10GbE. Being open standards based and offering full support for OpenFlow, it's also ready for the next generation of software defined networks. Unlike many rival switches, the S4820T also supports Layer 3 routing protocols as standard and not as an optional (and expensive) upgrade.

Chassis: 1U rack

Ports: 48 x 10GBaseT, 4 x 40-Gigabit QSFP+

Backplane: 1.28 Tbps full duplex

Forwarding capacity: 960 Mpps

Power: 2 x 460W hot-plug PSUs

Cooling: 2 x dual fan standard or reverse flow modules
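
The headline figures can be sanity-checked from the port counts. This Python sketch assumes the usual convention of counting minimum-size 64-byte Ethernet frames plus 20 bytes of preamble and inter-frame gap; how Dell arrives at its 960 Mpps figure is not stated here, so treat the comparison as approximate.

```python
# Sanity check of the quoted backplane and forwarding figures.
PORTS_10G, PORTS_40G = 48, 4

one_way_gbps = PORTS_10G * 10 + PORTS_40G * 40    # all ports, one direction
full_duplex_tbps = one_way_gbps * 2 / 1000        # both directions, in Tbps

# Assumption: 64-byte frame + 8-byte preamble + 12-byte inter-frame gap.
WIRE_BYTES_PER_FRAME = 64 + 8 + 12
line_rate_mpps = one_way_gbps * 1e9 / (WIRE_BYTES_PER_FRAME * 8) / 1e6

print(full_duplex_tbps)           # 1.28
print(round(line_rate_mpps, 1))   # 952.4
```

Under that assumption, line rate on every port works out at roughly 952 Mpps, just under the quoted 960 Mpps, which suggests the switch is specified to forward at full speed on all ports at once.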

Dave is an IT consultant and freelance journalist specialising in hands-on reviews of computer networking products covering all market sectors from small businesses to enterprises. Founder of Binary Testing Ltd, the UK’s premier independent network testing laboratory, Dave has over 45 years of experience in the IT industry.

Dave has produced many thousands of in-depth business networking product reviews from his lab which have been reproduced globally. Writing for ITPro and its sister title, PC Pro, he covers all areas of business IT infrastructure, including servers, storage, network security, data protection, cloud, infrastructure and services.