IT Pro Verdict

The Quattro 1396-T squeezes 24 Xeon E3 V3 servers into 3U of rack space, making it ideal for cloud services and web hosting. Build quality is solid, all nodes can be remotely managed and it’s great value.

Pros

High server density; Innovative design; Good value; Remote management

Cons

Noisy cooling fans

Multi-node servers are becoming a popular alternative to blade servers, as they let you maximize rack density for essential cloud services. Boston's latest Quattro 1396-T shows off these advantages, delivering 24 compute nodes in a compact 3U chassis, all at a tempting price.

The 1396-T showcases Supermicro's ongoing MicroCloud initiative, which sees the manufacturer constantly looking for new ways to cram more servers into less space. It has evolved from the Quattro 1332-T we reviewed in 2012, which packed eight Xeon E3 server nodes into 3U of rack height.

The 1396-T triples the node count, as each motherboard hosts two separate servers. Each has its own network ports and, unlike the 1332-T, the sleds have room for two internal SFF hard disks per node.
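The density gain is simple arithmetic, sketched below using the 12 sled bays from the specification list and two nodes per sled. The 42U rack height is our own illustrative assumption, not a figure from the review, and the sketch ignores rack space needed for switches and PDUs.

```python
# Node-density arithmetic for the two Quattro generations described above.
CHASSIS_HEIGHT_U = 3


def nodes_per_chassis(sleds: int, nodes_per_sled: int) -> int:
    """Total independent servers in one chassis."""
    return sleds * nodes_per_sled


quattro_1396t = nodes_per_chassis(sleds=12, nodes_per_sled=2)  # 24 nodes
quattro_1332t = 8  # eight Xeon E3 nodes in the 2012 model

print(quattro_1396t)                     # 24
print(quattro_1396t // quattro_1332t)    # 3, i.e. tripled
print(quattro_1396t / CHASSIS_HEIGHT_U)  # 8.0 nodes per U

# Hypothetical 42U rack filled with chassis only (no switches or PDUs).
RACK_HEIGHT_U = 42
chassis_per_rack = RACK_HEIGHT_U // CHASSIS_HEIGHT_U  # 14 chassis
print(chassis_per_rack * quattro_1396t)  # 336 nodes
```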

The chassis is a passive shell with four big cooling fans and two whopping 2kW Platinum-rated PSUs linked to a power backplane. The power cables are routed to the front, so you don't need to go rummaging around at the back to swap out a faulty unit.

One motherboard but two independent servers with their own network ports

Sled design

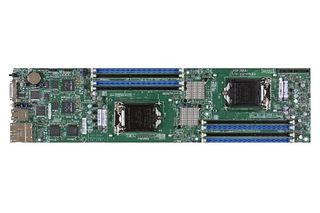

The sleds use Supermicro's proprietary X10SLE-DF motherboard. It has two processor sockets, one for each node, and the review system was supplied with six servers sporting 3.1GHz E3-1220 V3 Xeons.

Board design is tidy, with the components neatly laid out along its length to ensure optimum airflow. The processors are mounted with chunky passive heatsinks, and each is flanked on opposite sides by its four DIMM slots.

Each node gets dual Gigabit ports, which are accessed from the front. There's also a KVM port for attaching a local keyboard, monitor and mouse using the supplied cable, which also provides a serial port and two USB 2 ports.

The sleds are easy to remove and replace using the locking tab and handle. All they have at the back is a small edge connector which draws power from the backplane and provides fan control data.

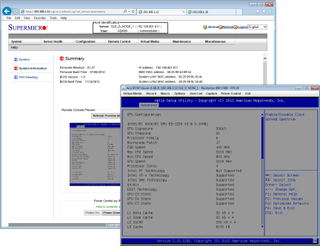

Each node can be remotely managed and controlled via the sled's shared RMM port

Storage and expansion

There's room at the back of each sled for two stacks of two SFF SATA hard disks, which plug directly into a daughterboard. The two front drives are connected to the first node and the two rear drives to the second node.

Each node has its own Intel C224 chipset, which supports SATA III hard disks. It can manage stripes (RAID0) or mirrors (RAID1), which is sufficient as each node can take no more than two hard disks.
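To show what the two supported RAID modes mean in practice, here is a minimal sketch of per-node usable capacity. It assumes each node is fitted with two identical 500GB drives, matching the drive size in the specification list; the function name is our own.

```python
# Usable capacity per node for the two RAID modes the C224 handles here.
# Assumes identical drives; each node holds at most two.


def usable_capacity_gb(level: int, drive_gb: int, drives: int = 2) -> int:
    if not 1 <= drives <= 2:
        raise ValueError("each node takes one or two drives")
    if level == 0:  # stripe: capacities add, no redundancy
        return drive_gb * drives
    if level == 1:  # mirror: one drive's worth, survives a single drive failure
        if drives != 2:
            raise ValueError("RAID1 needs two drives")
        return drive_gb
    raise ValueError("only RAID0 and RAID1 are supported")


print(usable_capacity_gb(0, 500))  # 1000
print(usable_capacity_gb(1, 500))  # 500
```

A stripe doubles capacity and throughput but loses everything if either disk fails, whereas a mirror halves capacity in exchange for surviving a single disk failure.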

With space at a premium there's no room for any expansion slots. The board also doesn't have an internal USB port or SD card slot for booting from an embedded hypervisor.

The memory slots support 8GB DIMMs, so each node can be pushed to a maximum of 32GB. The price of the review system covers three populated sleds, with 16GB of 1600MHz DDR3 per node.

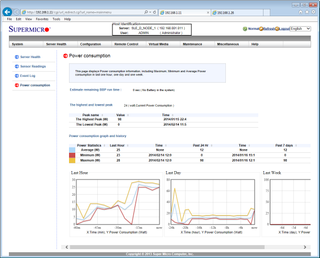

You can remotely control node power and the browser interface also provides graphs of consumption

Node operation and noise

Each sled has a tiny power button, which turns its nodes on and off. It's well hidden behind the sled's locking tab, so there's no chance it'll be pressed accidentally.

Local access is easy enough using the KVM cable, and a large button in the middle of the sled's end plate switches control between the two nodes. Usefully, the KVM button lights green when node 1 has control and amber for node 2.

Apart from the hard disks, the sleds have no moving parts, as all cooling is handled by the chassis. The downside is noise: even on the optimum setting, it sounds like a passenger jet waiting to take off, so a full rack of these systems will be deafening.

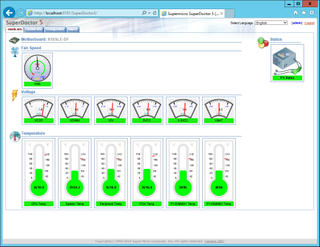

The SuperDoctor 5 SNMP management service monitors node operations and can send email alerts

Power consumption and remote management

Boston supplied the review system with three fully populated sleds; the remaining slots held sleds with unpopulated motherboards. With all sleds powered off, we measured a baseline of 270W for the chassis and fans (170W with all nodes unplugged).

With the six nodes in the three populated sleds running Windows Server 2012 and idling, we measured two, four and six active nodes drawing a total of 310W, 340W and 360W respectively. Using the SiSoft Sandra benchmarking app to push the CPUs to maximum load on each node, we saw power peak at 420W, 550W and 660W respectively.
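The measurements above can be turned into a rough per-node figure by subtracting the 270W chassis-and-fans baseline and dividing by the number of active nodes. This is a sketch that assumes the baseline stays fixed as nodes power on; the review doesn't state that, so treat the results as approximations.

```python
# Rough per-node power derived from the review's measurements.
# Assumption: the 270W chassis-and-fans baseline is constant as nodes come online.
BASELINE_W = 270
idle_w = {2: 310, 4: 340, 6: 360}  # active nodes -> total draw when idling
load_w = {2: 420, 4: 550, 6: 660}  # active nodes -> peak draw under Sandra load


def watts_per_node(totals: dict[int, int], baseline: int = BASELINE_W) -> dict[int, float]:
    """Average draw above the baseline attributable to each active node."""
    return {n: (total - baseline) / n for n, total in totals.items()}


print(watts_per_node(idle_w))  # {2: 20.0, 4: 17.5, 6: 15.0}
print(watts_per_node(load_w))  # {2: 75.0, 4: 70.0, 6: 65.0}
```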

Each node has an embedded RMM chip, and the two chips on a sled share a dedicated network port. Each node has its own RMM IP address, so you can access it remotely via a web browser.

The web interface is basic but provides sensor readouts for all key components and their thresholds can be tied in with SNMP traps and email alerts. Node power can be managed from the console and you also get full remote control and virtual media services as standard.
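Beyond the browser console, node power can in principle be scripted. The sketch below assumes each node's RMM controller answers standard IPMI 2.0 over LAN, as the bundled IPMI View software suggests, and only builds `ipmitool chassis power` command lines. The node names, IP addresses and credentials are hypothetical placeholders.

```python
# Builds ipmitool command lines for per-node power control over the RMM network.
# Assumptions: IPMI 2.0 over LAN ("lanplus") is enabled on each RMM controller;
# all names, IPs and credentials below are hypothetical.
import shlex

NODE_RMM_IPS = {  # one RMM IP address per node (placeholder values)
    "sled1-node1": "192.0.2.11",
    "sled1-node2": "192.0.2.12",
}

VALID_ACTIONS = {"status", "on", "off", "cycle", "reset", "soft"}


def power_command(ip: str, user: str, password: str, action: str) -> list[str]:
    """Return an argv list for `ipmitool chassis power <action>` on one node."""
    if action not in VALID_ACTIONS:
        raise ValueError(f"unsupported power action: {action}")
    return ["ipmitool", "-I", "lanplus", "-H", ip, "-U", user, "-P", password,
            "chassis", "power", action]


for name, ip in NODE_RMM_IPS.items():
    cmd = power_command(ip, "ADMIN", "secret", "status")  # placeholder credentials
    print(name, shlex.join(cmd))
```

Passing each list to `subprocess.run` on a management host would execute the commands. In practice, ipmitool's `-E` option, which reads the password from the `IPMI_PASSWORD` environment variable, avoids exposing it on the command line.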

Conclusion

The Quattro 1396-T pushes rack density to the next level at a competitive price. Boston advised us that a fully populated system, with all 24 nodes matching the specification of the six in the review system, costs £16,999 ex VAT, which equates to only £708 per server.
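The per-server figure is Boston's quoted price divided across all 24 nodes. A quick check, using `Decimal` to avoid floating-point rounding on currency:

```python
# Per-server cost of a fully populated chassis from Boston's quoted ex-VAT price.
from decimal import Decimal, ROUND_HALF_UP

FULL_SYSTEM_PRICE = Decimal("16999")  # ex VAT, all 24 nodes populated
NODES = 24

per_node = (FULL_SYSTEM_PRICE / NODES).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
print(per_node)  # 708.29
```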

Chassis: 3U rack

Power: 2 x 2000W hot-plug PSUs

Sled bays: 12 hot-swap

Three hot-swap sleds each with the following:

Motherboard: Supermicro X10SLE-DF

CPU: 2 x 3.1GHz E3-1220 V3

Memory: 16GB 1600MHz DDR3 per node (max 32GB)

Storage: 6 x 500GB Seagate Constellation SATA III (max 2 per node)

RAID: Intel C224

Array support: RAID0, 1

Network: 4 x Gigabit (2 per node)

Management: Embedded RMM with 10/100 port (shared)

Software: Supermicro SuperDoctor 5 and IPMIView 2

Warranty: 3yrs on-site NBD

Dave is an IT consultant and freelance journalist specialising in hands-on reviews of computer networking products covering all market sectors from small businesses to enterprises. Founder of Binary Testing Ltd, the UK’s premier independent network testing laboratory, Dave has over 45 years of experience in the IT industry.

Dave has produced many thousands of in-depth business networking product reviews from his lab which have been reproduced globally. Writing for ITPro and its sister title, PC Pro, he covers all areas of business IT infrastructure, including servers, storage, network security, data protection, cloud, infrastructure and services.