Facebook’s AI image recognition research aimed at increasing privacy, not reducing it

The system subtly distorts images in videos to hinder image recognition systems

Facebook has created an artificial intelligence system that works to de-identify people rather than analyse images for facial recognition, as is the norm with such technology.

While Facebook has previously used facial recognition tech to support automated photograph tagging on its platform, it has stopped doing so by default as part of a move to improve the privacy of its users.

But now it's potentially going a step further and using the same AI-powered technology to counteract facial-recognition systems.

It does this by combining a trained face classifier with an adversarial auto-encoder. The system maps a person's face, creating a true image of their appearance along with a mask that is used to hinder and distort the identifying parameters of that face.

These images can then be used in a video of the person. The result is footage in which the person remains easily identifiable to someone who knows them, but which carries a suite of distortions and almost imperceptible changes. As a result, a facial recognition system applied to the video will have trouble correctly identifying its subject.
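To make the idea of pairing an image with a mask more concrete, here is a minimal sketch of one way the two could be combined for a single frame. It is an illustration under stated assumptions, not Facebook's implementation: the function name `blend_with_mask`, the per-pixel blending formula, and the tiny 2x2 grayscale "images" are all hypothetical, and the real system works on learned network outputs rather than hand-written values.

```python
from typing import List

# Hypothetical representation: a grayscale image as rows of pixels in [0.0, 1.0].
Image = List[List[float]]


def blend_with_mask(original: Image, generated: Image, mask: Image) -> Image:
    """Blend two images pixel by pixel (assumed formula, for illustration only).

    A mask value of 0.0 keeps the original pixel, 1.0 takes the generated
    pixel, and values in between mix the two proportionally.
    """
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("all inputs must have the same height")
    blended: Image = []
    for o_row, g_row, m_row in zip(original, generated, mask):
        if not (len(o_row) == len(g_row) == len(m_row)):
            raise ValueError("all inputs must have the same width")
        blended.append(
            [m * g + (1.0 - m) * o for o, g, m in zip(o_row, g_row, m_row)]
        )
    return blended


def max_pixel_change(a: Image, b: Image) -> float:
    """Largest absolute per-pixel difference between two same-sized images."""
    return max(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))


# Made-up toy values standing in for one video frame.
original = [[0.2, 0.4], [0.6, 0.8]]
generated = [[0.3, 0.3], [0.7, 0.7]]
mask = [[0.0, 0.5], [1.0, 0.1]]

result = blend_with_mask(original, generated, mask)
change = max_pixel_change(original, result)
```

In this toy setup, the mask decides where the frame is altered and by how much, which is one plausible reading of how small, localised changes could leave a face familiar to a human viewer while shifting the details a recognition system relies on.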

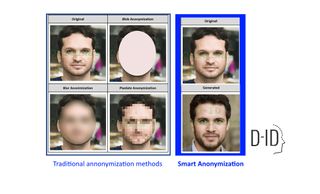

"Our approach is called Smart Anonymization and it works by removing facial images from videos and still images, and replacing them with computer-generated, photorealistic faces of nonexistent people," explained Facebook's researchers. "The anonymized faces preserve the key, non-identifying attributes of the original faces, ie age, gender, expression, gaze direction, motion etc., but remove all other personally identifying information."

As this AI tech is the fruit of Facebook's research division, it's not likely to be put to commercial use or integrated into Facebook's main social network platform for some time.

But it does demonstrate how the technology used to identify people can essentially be reversed and used to obscure their identity from AI-powered systems. Such technology could help curtail the use of deepfakes, whereby an AI system superimposes the image of one person over another, potentially giving the impression that they did something they didn't or putting them in a compromising position. The placing of Hollywood actors into pornographic videos is one such example.

As such, a technology currently seen by some as an invasion of privacy could end up aiding privacy in a potentially ironic turn of fate.

Roland is a passionate newshound whose journalism training initially involved a broadcast specialism, but he’s since found his home in breaking news stories online and in print.

He held a freelance news editor position at ITPro for a number of years after his lengthy stint writing news, analysis, features, and columns for The Inquirer, V3, and Computing. He was also the news editor at Silicon UK before joining Tom’s Guide in April 2020 where he started as the UK Editor and now assumes the role of Managing Editor of News.

Roland’s career has seen him develop expertise in both consumer and business technology, and during his freelance days, he dabbled in the world of automotive and gaming journalism, too.